Create your own machine translation system for any domain and business task

Machine Translation Toolkit

Data Preparation

Parse, filter, and mark up parallel and monolingual corpora. Create blocks for test and validation data.

Model Training

Train a custom neural architecture with parallel job lists, GPU analytics, and quality estimation.

Deployment

When model training finishes, the model can be deployed automatically as an API or made available to download for offline use.

From Novice to Expert

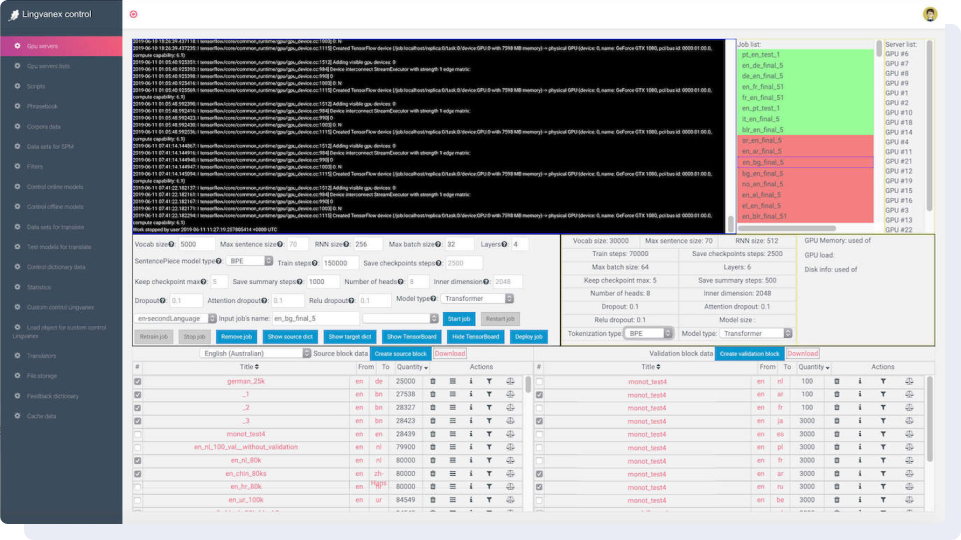

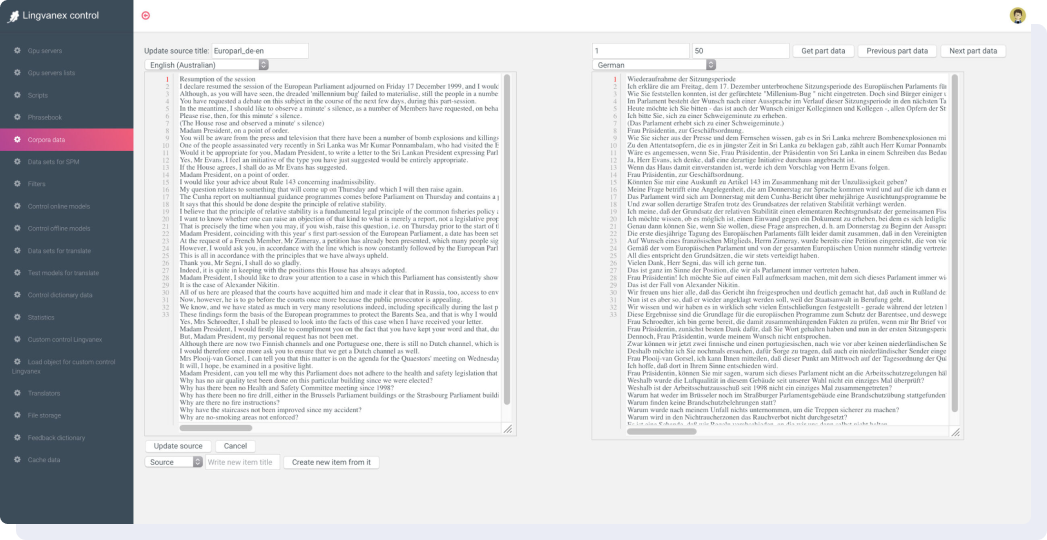

The dashboard combines the latest linguistic and statistical techniques used to adapt the software to customer domains and improve translation quality. In the picture below, the right side shows the list of tasks and the GPU servers on which models are being trained. The parameters of the neural network are in the center, and below them are the datasets that will be used for training.

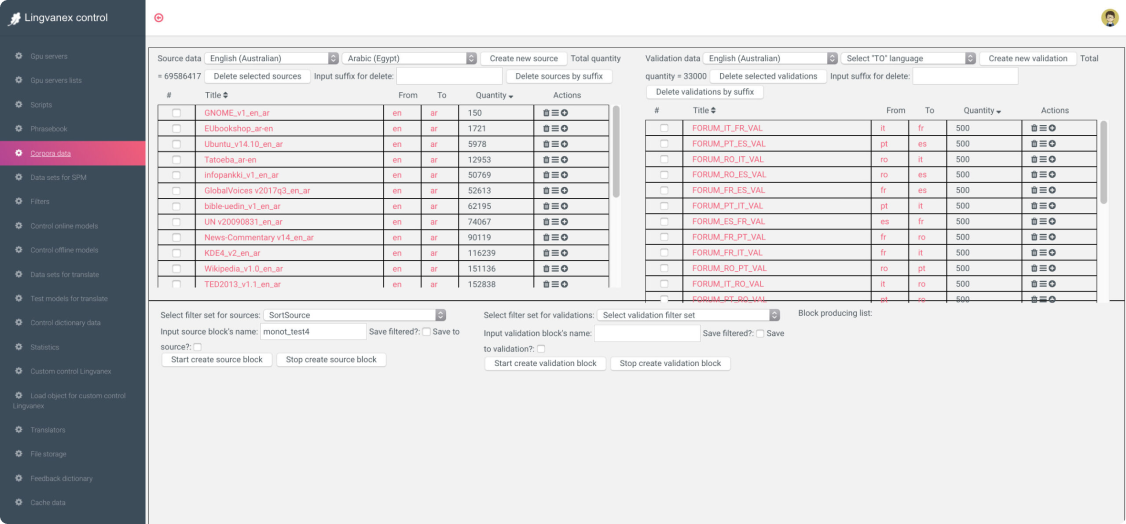

Work with Parallel Data

Work on a new language begins with dataset preparation. The dashboard has many predefined datasets from open sources such as Wikipedia, European Parliament, Paracrawl, Tatoeba, and others. About 5M translated lines are enough to reach average translation quality.
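As a rough illustration of how test and validation blocks can be held out of a parallel dataset, here is a minimal Python sketch. The function name `split_corpus`, its parameters, and the fixed random seed are assumptions made for this example; they do not describe how the dashboard itself performs the split.

```python
import random


def split_corpus(pairs, valid_size=2, test_size=2, seed=13):
    """Shuffle (source, target) pairs and hold out validation and test blocks.

    Hypothetical helper for illustration only; sizes are counted in lines.
    """
    if valid_size + test_size >= len(pairs):
        raise ValueError("held-out blocks must be smaller than the corpus")
    shuffled = list(pairs)
    # A seeded generator keeps the split reproducible between runs.
    random.Random(seed).shuffle(shuffled)
    test = shuffled[:test_size]
    valid = shuffled[test_size:test_size + valid_size]
    train = shuffled[test_size + valid_size:]
    return train, valid, test


if __name__ == "__main__":
    corpus = [(f"source line {i}", f"target line {i}") for i in range(10)]
    train, valid, test = split_corpus(corpus)
    print(len(train), len(valid), len(test))
```

Keeping the three blocks disjoint matters because a line that appears in both training and test data inflates the measured translation quality.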

Dictionary and Tokenizer Tuning

Datasets are lines of text translated from one language to another. The tokenizer splits the text into tokens and builds dictionaries from them, sorted by token frequency. A token can be a single character, a syllable, or a whole word. With Lingvanex Data Studio you can control the whole process of creating SentencePiece token dictionaries for each language separately.

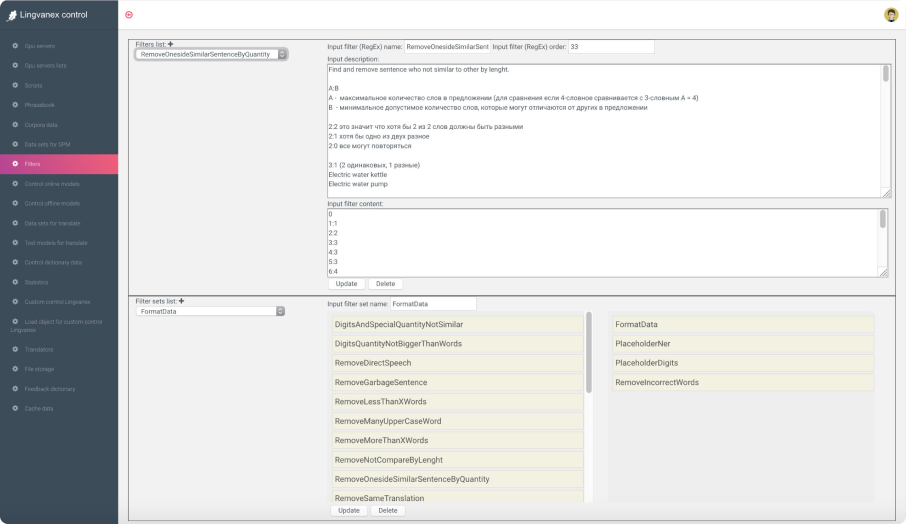
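The idea of a frequency-sorted token dictionary can be sketched in a few lines of Python. This is a simplified illustration, not the SentencePiece algorithm, which learns subword units from the data. The function `build_vocab` and its `unit` and `max_size` parameters are hypothetical names chosen for this example.

```python
from collections import Counter


def build_vocab(lines, unit="word", max_size=None):
    """Return (token, count) pairs sorted by descending frequency.

    Hypothetical helper: 'word' splits on whitespace, 'char' uses single
    non-space characters. Ties are broken alphabetically.
    """
    counts = Counter()
    for line in lines:
        if unit == "word":
            tokens = line.split()
        elif unit == "char":
            tokens = [ch for ch in line if not ch.isspace()]
        else:
            raise ValueError(f"unknown unit: {unit!r}")
        counts.update(tokens)
    ranked = sorted(counts.items(), key=lambda item: (-item[1], item[0]))
    # A max_size of None keeps every token.
    return ranked[:max_size]


if __name__ == "__main__":
    sample = ["the cat sat", "the cat", "the"]
    print(build_vocab(sample))
    print(build_vocab(sample, unit="char", max_size=3))
```

Limiting `max_size` shows the usual trade-off: a smaller dictionary is cheaper to train with, but rare tokens fall out of it and have to be represented by smaller units.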

Data Filtering and Quality Estimation

More than 20 filters are available for parallel and monolingual corpora, so you can build a high-quality dataset from open-source or parsed data. You can mark up named entities, digits, and any other tokens to train the system to leave some words untranslated or to translate them in a specific way.
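To make the filtering and markup steps concrete, here is a minimal Python sketch of a few common parallel-corpus filters and a simple number markup. The filter choices, the thresholds, the function names, and the `<num>` tag format are all assumptions made for this example; the filters and markup format actually used by the dashboard are not specified here.

```python
import re


def keep_pair(src, tgt, max_ratio=3.0, max_len=200):
    """Return True if a (source, target) pair passes some basic checks."""
    src_len, tgt_len = len(src.split()), len(tgt.split())
    if src_len == 0 or tgt_len == 0:
        return False  # one side is empty
    if src_len > max_len or tgt_len > max_len:
        return False  # line is too long
    if max(src_len, tgt_len) / min(src_len, tgt_len) > max_ratio:
        return False  # lengths differ too much, likely misaligned
    if src.strip() == tgt.strip():
        return False  # source was copied instead of translated
    return True


def filter_corpus(pairs, **options):
    """Drop duplicate pairs and pairs rejected by keep_pair."""
    seen, kept = set(), []
    for src, tgt in pairs:
        key = (src.strip(), tgt.strip())
        if key in seen or not keep_pair(src, tgt, **options):
            continue
        seen.add(key)
        kept.append(key)
    return kept


# Digits with optional thousands or decimal separators, e.g. 1,500.50
NUMBER = re.compile(r"\d+(?:[.,]\d+)*")


def mark_numbers(text, open_tag="<num>", close_tag="</num>"):
    """Wrap numbers in (hypothetical) tags so a model can learn to copy them."""
    return NUMBER.sub(lambda m: f"{open_tag}{m.group(0)}{close_tag}", text)


if __name__ == "__main__":
    raw = [("Hello world", "Hallo Welt"), ("Hello world", "Hallo Welt"), ("Hi", "")]
    print(filter_corpus(raw))
    print(mark_numbers("Pay 1,500.50 EUR by 2024"))
```

The same markup has to be applied to both sides of every pair, otherwise the model has no consistent signal for which tokens to carry over unchanged.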